Common Management Errors (126)

Cxxvi) Not understanding how to conduct experiments (tests, pilot projects, trial runs, etc)

Introduction

New ideas are often introduced without appropriate testing, ie on a hunch.

Need to adopt a scientifically sound approach to testing innovations, etc; there is software to help. Ideally, this should involve randomised testing with a control group, as part of a 'test and learn' culture.

If using a sample to conduct the test on, ensure that the sample is representative of the population that you are interested in.
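One common way of keeping a sample representative is stratified random sampling: draw the same fraction of units from each subgroup (stratum), so the sample keeps the population's mix. The Python sketch below assumes the units are stores grouped by region; the function name `stratified_sample`, the store data and the 20% fraction are hypothetical, chosen only for illustration.

```python
import random
from collections import defaultdict

def stratified_sample(units, stratum_of, fraction, seed=42):
    """Draw the same fraction of units from every stratum (eg region or store size)
    so that the sample mirrors the population's mix."""
    rng = random.Random(seed)  # fixed seed so the sample can be reproduced
    strata = defaultdict(list)
    for unit in units:
        strata[stratum_of(unit)].append(unit)
    sample = []
    for key in sorted(strata):
        members = strata[key]
        k = max(1, round(len(members) * fraction))  # at least one unit per stratum
        sample.extend(rng.sample(members, k))       # random, without replacement
    return sample

# Hypothetical population: 60 urban and 40 rural stores.
stores = [{"id": i, "region": "urban" if i < 60 else "rural"} for i in range(100)]
sample = stratified_sample(stores, lambda s: s["region"], fraction=0.2)
print(len(sample))                                  # 20
print(sum(s["region"] == "urban" for s in sample))  # 12, ie the same 60:40 mix
```

Simple random sampling can, by chance, over-represent one group; stratifying removes that risk for the characteristics you stratify on (but not for ones you don't).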

You need statistically valid results that can stand up to investigative rigour.
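Statistical validity depends heavily on sample size. As a rough illustration, the sketch below applies the standard normal-approximation formula for comparing two proportions to estimate how many units each group needs. The 5% and 6% conversion rates, the 5% significance level and the 80% power are assumptions for the example, not recommendations.

```python
import math
from statistics import NormalDist

def sample_size_per_group(p_control, p_treatment, alpha=0.05, power=0.8):
    """Approximate number of units needed in EACH group to detect the difference
    between two proportions with a two-sided test (normal approximation)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 when alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 when power = 0.8
    variance = p_control * (1 - p_control) + p_treatment * (1 - p_treatment)
    n = (z_alpha + z_beta) ** 2 * variance / (p_control - p_treatment) ** 2
    return math.ceil(n)  # round up: part of a unit still needs a whole unit

# Hypothetical: detect a rise in conversion rate from 5% to 6%.
print(sample_size_per_group(0.05, 0.06))  # 8155
```

Because the difference is squared in the denominator, halving the effect you want to detect roughly quadruples the sample you need.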

Generally, testing is more appropriate for tactical decisions, like choosing a new store format, new product or service, etc, than for strategic ones, like considering a merger, acquisition, etc.

Setting up an experiment

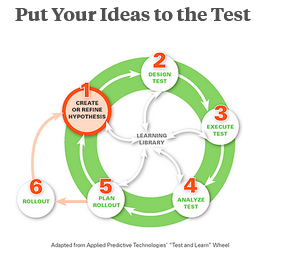

The steps involved in conducting an experiment:

i) define a testable hypothesis

ii) design the test

iii) implement the test

iv) analyse the test data

v) based on data analysis, determine appropriate actions

vi) record learnings for future benefit.

(source: Thomas Davenport, 2009)

Expanding on each of the 6 steps

1. Define a testable hypothesis, ie your expectations. Use the following questions to help create your hypothesis:

"...- Can you measure what happens when you make the change? (You need to be able to 'pass or fail' the test, based on your hypothesis.)

- Does it fit with your team and organisation's overall strategy, goals and values?

- Does it add value to what you do?..."

MTCT, 2024b

If any answer is 'no', you need to modify your hypothesis or consider not performing the experiment.

Finally, define what success looks like, so that you have a basis for measuring the outcome of your experiment.
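Writing the hypothesis down with an explicit metric and threshold, before the test starts, is what makes it possible to "pass or fail". A minimal Python sketch, assuming success is defined as a minimum relative lift over the control group; the class name `Hypothesis`, its fields and the figures are hypothetical. Note that this only checks the size of the effect, not whether it could have arisen by chance.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Hypothesis:
    """A testable hypothesis: the change, the metric it should move, and the
    threshold that counts as success (agreed BEFORE the test starts)."""
    change: str
    metric: str
    min_relative_lift: float  # eg 0.05 means "at least 5% better than control"

    def passed(self, control_value: float, treatment_value: float) -> bool:
        lift = (treatment_value - control_value) / control_value
        return lift >= self.min_relative_lift

h = Hypothesis(change="New store layout",
               metric="average weekly sales per store",
               min_relative_lift=0.05)
print(h.passed(control_value=10_000, treatment_value=10_800))  # True (8% lift)
print(h.passed(control_value=10_000, treatment_value=10_200))  # False (2% lift)
```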

2. Design the test. Think about how you are going to conduct the test and outline what you will be testing and how long it will take; this includes selecting a control group, identifying the sites or units to be tested and defining the test and control conditions. Some examples:

- testing a new product or process (run the experiment for a specific period and use the metrics identified in the previous step to evaluate its success)

- if modifying an existing product or process, you need a control group and a treatment group, ie A/B split testing; minimise the number of variables that differ between the control and treatment groups; ideally, they differ in only one variable.

"...your control group is what you use as a baseline measure. Try to avoid making any changes with this group during the experiment. Do the same with your treatment group, except for the change you want to test. You can then compare your control group with your treatment group to test your hypothesis..."

MTCT, 2024b
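A minimal sketch of random assignment for an A/B split, assuming the units are stores with hypothetical IDs. Shuffling with a seeded random number generator gives every unit the same chance of landing in either group, and the same seed reproduces the split later for auditing. The function name `assign_groups` is illustrative.

```python
import random

def assign_groups(unit_ids, seed=2024):
    """Randomly split units into equal-sized control and treatment groups."""
    rng = random.Random(seed)  # fixed seed so the split can be reproduced
    shuffled = list(unit_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {"control": sorted(shuffled[:half]),
            "treatment": sorted(shuffled[half:])}

groups = assign_groups([f"store-{i:02d}" for i in range(10)])
print(groups["control"])
print(groups["treatment"])
```

Letting a random process, rather than a manager, choose which sites get the change avoids unconsciously putting the "best" sites in the treatment group.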

Sometimes it is not possible to set up a control group. If this happens, you can carefully select past data as your control group and compare it with the treatment group. For example, a business with only one store could compare this year's sales with sales from the same period last year.
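The single-store example can be sketched as a year-on-year comparison. The monthly figures below are hypothetical, and the sketch assumes the change was introduced in March: the months before the change show the underlying trend (about 2% growth), so only the extra growth in March is a candidate effect of the change. External factors that differ between the two years can still distort the comparison.

```python
def year_on_year_change(this_year, last_year):
    """Percentage change per period, using last year's same period as the baseline."""
    changes = {}
    for period, sales in this_year.items():
        baseline = last_year[period]
        changes[period] = round(100 * (sales - baseline) / baseline, 1)
    return changes

# Hypothetical monthly sales for a single store; the change was introduced in March.
last_year = {"Jan": 50_000, "Feb": 48_000, "Mar": 52_000}
this_year = {"Jan": 51_000, "Feb": 48_960, "Mar": 57_200}
print(year_on_year_change(this_year, last_year))
# {'Jan': 2.0, 'Feb': 2.0, 'Mar': 10.0}
```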

Some tips

a) if using control and treatment groups, test one factor at a time

b) don't underestimate the amount of effort and resources required to design and conduct a test

c) understand the risks associated with the test, using risk analysis and management. Risk consists of 2 elements, ie the probability of something going wrong and the negative consequences if it does. Risk analysis involves the following steps:

i) risk identification (identify potential threats, etc)

ii) risk measurement (assess the magnitude and likelihood of the risk's impacts, etc)

iii) risk mitigation (develop strategies to reduce or eliminate the risk, etc)

iv) risk reporting and monitoring (record details of learnings, etc)

v) risk governance (develop a framework that defines roles, segregates duties, assigns authority, responsibilities and accountabilities, and oversees risk-related matters at all levels within the organisation; for the framework to be effective, all stakeholders need to participate actively and adhere to it)

d) share the risk and potential gains with other stakeholders

e) accept the risk (especially if there is nothing you can do to prevent or mitigate it, and/or the potential loss is less than the cost of insuring against it, and/or the potential gain is worth the risk, eg potential sales will exceed the costs)

f) control the risk (if you choose to accept the risk, you need to reduce its impact, eg by testing, ie conducting the activity on a small scale and in a controlled way; this will help you identify preventive and detective actions before you implement on a larger scale):

- preventive action (aims to stop the risk developing, eg firewall protection on corporate servers, health and safety training, etc)

- detective action (involves identifying the points in a process where something could go wrong and having steps ready to fix the problem promptly if it occurs, eg double-checking reports, conducting safety checks before a product is released, installing sensors to detect product defects, etc)
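Since risk combines probability and consequences, a simple risk register can score each risk as probability × impact (its expected loss) and apply the "accept if the potential loss is less than the cost of insuring against it" rule from the tips above. A minimal sketch; the class name `Risk`, the example risks and all figures are hypothetical, and a real decision would also weigh factors such as ethics and people safety that a single number cannot capture.

```python
from dataclasses import dataclass

@dataclass
class Risk:
    description: str
    probability: float      # chance it happens during the test, 0 to 1
    impact: float           # estimated cost if it happens
    mitigation_cost: float  # cost of insuring against or mitigating it

    @property
    def expected_loss(self) -> float:
        return self.probability * self.impact

    def decision(self) -> str:
        # Simplified rule: accept the risk if mitigating it costs more than the expected loss.
        return "accept" if self.expected_loss < self.mitigation_cost else "mitigate"

risks = [
    Risk("Trial store loses sales during refit", 0.3, 50_000, 10_000),
    Risk("Staff unfamiliar with new process", 0.5, 4_000, 5_000),
]
# Review the biggest risks first.
for r in sorted(risks, key=lambda r: r.expected_loss, reverse=True):
    print(f"{r.description}: expected loss {r.expected_loss:,.0f} -> {r.decision()}")
```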

Summary of risk analysis

"...Risk analysis is a proven way of identifying and assessing factors that can negatively affect the success of a business or project. It allows you to examine the risks that you or your organisation face, and helps you decide whether or not to move forward with the decision.

You perform a risk analysis by identifying threats, and estimating the likelihood of those threats being realised.

Once you work out the value of the risk you face, you can start looking at ways to manage risk effectively. This may include choosing to avoid the risk, sharing it, or accepting it while reducing its impact. Not only can this help you make sensible decisions but it can also alleviate feelings of stress and anxiety.

It is essential that when you're working through your risk analysis, and that you're aware of all the possible impacts of the risk revealed. This includes being mindful of cost, ethics, and people safety..."

MTCT, 2024c

3. Implement the test. Once the test is designed, implement it and inform the affected stakeholders why it is taking place and how you will conduct it. Continue to monitor and evaluate the test's performance, and be especially alert to external factors that could affect it. Ensure that stakeholders who are negatively affected by the test are suitably compensated.

4. Analyse the test data. Start by comparing actual performance against your hypothesis:

"...- What was supposed to happen and what did happen?

- Why was there a difference?

- Was the experiment a success? (Don't be too disappointed if it wasn't!)

- what unexpected consequences happened as a result of your experiment? How could you manage or take advantage of these?..."

MTCT, 2024b
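Part of answering "why was there a difference?" is checking whether the difference is larger than chance alone would produce. One simple, distribution-free way to do this is a permutation test (swapped in here for illustration): repeatedly shuffle the group labels and count how often a random split gives a gap in means at least as large as the one observed. The weekly sales figures are hypothetical.

```python
import random
from statistics import mean

def permutation_p_value(control, treatment, n_shuffles=10_000, seed=1):
    """Two-sided permutation test: how often does randomly relabelling the units
    produce a difference in means at least as large as the one observed?"""
    rng = random.Random(seed)
    observed = abs(mean(treatment) - mean(control))
    pooled = list(control) + list(treatment)
    n_control = len(control)
    extreme = 0
    for _ in range(n_shuffles):
        rng.shuffle(pooled)  # random relabelling of control vs treatment
        diff = abs(mean(pooled[n_control:]) - mean(pooled[:n_control]))
        if diff >= observed:
            extreme += 1
    return (extreme + 1) / (n_shuffles + 1)  # +1 avoids reporting exactly zero

control = [98, 102, 95, 101, 99, 97, 103, 100]      # hypothetical weekly sales index
treatment = [108, 111, 104, 109, 112, 106, 110, 107]
print(permutation_p_value(control, treatment))
```

A small p-value (conventionally below 0.05) suggests the gap is unlikely to be chance; it does not by itself explain *why* the gap occurred.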

NB Think about what you have learned from the test as a basis for making future tests more beneficial.

Use statistical analysis to evaluate the results of the test. Some well-known statistical tests and methods include:

- Analysis of variance (ANOVA)

- Chi-squared test

- Correlation

- Factor analysis

- Mann-Whitney U

- Mean squared weighted deviation (MSWD)

- Pearson product-moment correlation coefficient

- Regression analysis

- Spearman rank correlation coefficient

- Student's t-test

- Time-series analysis

- Conjoint analysis

(main source: Wikipedia, 2024)
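For the common case of comparing conversion rates between a control and a treatment group, a two-proportion z-test is a standard choice; for a 2x2 table it gives the same result as the chi-squared test listed above (without continuity correction, z² equals the chi-squared statistic). A minimal sketch using only Python's standard library; the visitor and conversion counts are hypothetical.

```python
import math
from statistics import NormalDist

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates.
    Group A is the control, group B the treatment."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)  # overall rate if there were no difference
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

# Hypothetical: 4.0% vs 4.8% conversion from 10,000 visitors each.
z, p = two_proportion_z_test(conv_a=400, n_a=10_000, conv_b=480, n_b=10_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```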

5. Based on the data analysis, determine appropriate actions:

"...Sometimes, you can implement what you've learnt while you continue to conduct future experiments. Other times, you may have to conduct follow-up experiments before you can understand the best way forward..."

MTCT, 2024b
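The choice of action can be made more consistent by agreeing a decision rule in advance. The sketch below maps a result to the three outcomes used in the CKE example later in this section (roll out, modify and retest, kill). The function name `next_action` and the 2% minimum lift and 5% significance thresholds are illustrative assumptions, not recommendations.

```python
def next_action(lift, p_value, min_lift=0.02, alpha=0.05):
    """Suggest a next step from a test result. The thresholds are illustrative
    defaults; agree your own before the test starts."""
    significant = p_value < alpha
    if significant and lift >= min_lift:
        return "roll out"           # clear, worthwhile improvement
    if significant and lift < 0:
        return "kill"               # the change made things worse
    return "modify and retest"      # inconclusive, or too small to matter

print(next_action(lift=0.05, p_value=0.01))   # roll out
print(next_action(lift=-0.03, p_value=0.02))  # kill
print(next_action(lift=0.04, p_value=0.30))   # modify and retest
```

The rule is deliberately simple; a real decision would also weigh cost, risk and fit with strategy.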

6. Record learnings for future benefit. Record all the lessons learnt each time a test is conducted; they provide a good reference for handling future tests.

"...think about how you can use what you have learned. Would conducting future experiments help you to get more even value from your idea?..."

MTCT, 2024b
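A learning log is easier to search and reuse if every entry has the same structure. A minimal sketch, assuming one JSON record per line (JSON Lines); the class `LearningRecord`, its fields (including a `retest_by` date to flag when a result may be obsolete) and the example entry are hypothetical.

```python
import io
import json
from dataclasses import asdict, dataclass, field

@dataclass
class LearningRecord:
    """One entry in a (hypothetical) experiment learning log."""
    experiment: str
    hypothesis: str
    result: str
    decision: str
    lessons: list = field(default_factory=list)
    retest_by: str = ""  # date after which the result should be re-checked

def append_record(log, record):
    """Write one record as a single JSON line."""
    log.write(json.dumps(asdict(record)) + "\n")

def load_records(lines):
    """Rebuild records from JSON lines, skipping blank lines."""
    return [LearningRecord(**json.loads(line)) for line in lines if line.strip()]

log = io.StringIO()  # in practice, a shared file or database
append_record(log, LearningRecord(
    experiment="Store layout trial",
    hypothesis="New layout lifts weekly sales by at least 5%",
    result="8% lift, statistically significant",
    decision="roll out",
    lessons=["Allow two weeks for staff to adjust before measuring"],
    retest_by="2026-01-01",
))
records = load_records(log.getvalue().splitlines())
print(records[0].decision)  # roll out
```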

Need to build up capacity for testing

This involves establishing a standard process, ie appropriate infrastructure, plus

"...Training program to hone competencies, software to structure and analyse the tests as a means of capturing learning, a process for deciding when to repeat tests, and a central organisation to provide expert support for all above..."

Thomas Davenport, 2009

Areas of concern

- sample is not representative of the population

- inappropriate control group

- not randomising selection

- changing design during experiment

- desired outcomes not defined and measurable, eg customer satisfaction and employee engagement are harder to measure than sales and conversion rate

- not capturing the learnings from the experimentation

- no retesting timeline, ie not knowing when a test result has become obsolete

- organisation lacks a testing mindset or culture, ie

"...no major change in tactics should be adopted without being tested by people who understand testing..."

Thomas Davenport, 2009

- keep it as simple as possible

"...the more complex the experiment, the more it will cost to do, the more risks it will involve, and the more time it will take to analyse the results. However, you need to make sure that your experiment will provide meaningful data..."

MTCT, 2024b

Some examples (3)

- Capital One (credit card industry)

Capital One uses an information-based strategy:

"...the company has become one of the world's most aggressive testers since 1988......the firm was founded on the concept......ability to turn our business into a scientific laboratory where every decision about product design, marketing, sales communication, credit lines, customer selection, collection policies and cross-selling decisions could be subject to systematic testing using thousands of experiments......and it paid off: the company became the fifth-largest provider of credit cards in the United States..."

Thomas Davenport, 2009

- CKE Restaurants (quick-service restaurant chains)

"...process of new product introduction calls for rigorous testing at a certain stage. It starts with brainstorming, in which several cross-functional groups develop a variety of new product ideas. Only some of them make it past the next phase, judgmental screening, during which a group of marketing, product development, and operations people will evaluate ideas based on experience and intuition. Those that make the cut are actually developed and then tested in stores, with well-defined measures and control groups. At this point, executives decide whether to roll out a product system-wide, modify it for retesting or kill the whole idea..."

Thomas Davenport, 2009

Using this approach, CKE has a success rate of 1 in 4 new products, compared with an industry average of 1 in 50 or 60 new consumer products.
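Expressing the two success rates quoted above as percentages shows the size of the gap (simple arithmetic on those figures only):

```latex
\frac{1}{4} = 25\%, \qquad \frac{1}{50} = 2\%, \qquad \frac{1}{60} \approx 1.7\%

\frac{1/4}{1/50} = 12.5, \qquad \frac{1/4}{1/60} = 15
```

On these figures, a CKE new product is roughly 12 to 15 times as likely to succeed as the industry average.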

- eBay (online marketplace)

At eBay, randomised testing is a key component of making website changes, and it is relatively easy to perform randomised tests of website variations; eBay follows a well-defined process called the 'eBay experimentation platform':

"...- Hypothesis development

- Design of the experiment: determining test samples, experimental treatments, and other factors

- Set up experiment: assessing costs, determining how to prototype, ensuring fit with site's performance. (For example, making sure the testing doesn't slow down user response time)

- Launching of the experiment: figure out how long to run it......

- Tracking and monitoring

- Analyse and results..."

Thomas Davenport, 2009

In addition to online testing, eBay conducts extensive offline testing via lab studies, home visits, participatory design sessions, focus groups, trade-off analysis of website features, qualitative visual-design research, eye-tracking studies, diary studies, etc.

Summary

Tests can range from very basic exercises to complex projects, including prototype products or services.

To conduct an effective test you need to do the following:

- create a hypothesis

- design your test

- carry out your test

- analyse the results and do the necessary follow-up.

Need to institutionalise the process of doing and reviewing tests.

"...formalised testing can provide a level of understanding about what really works......Thanks to new, broadly available software and given some straightforward investments to build capabilities, managers can now base consequential decisions on scientifically valid experiments..."

Thomas Davenport, 2009

Furthermore, appropriately trained staff

"...can oversee the process, assisted by software that will help determine what kind of samples are necessary, which sites to use the testing and controls, and whether any changes resulting from experiments are statistically significant..."

Thomas Davenport, 2009

"...generally speaking, the triumphs of testing occurred in strategy execution, not strategy formulation..."

Thomas Davenport, 2009

"...testing...... needs to come out of a laboratory and into the boardroom. The key challenges are no longer technological or analytical; they have more to do with simply making managers familiar with the concepts and the process. Testing, and learning from testing, should become central to any organisation's decision-making. The principles of scientific method work as well in business as they do in other sector of life. It's time to replace 'I'll bet' with 'I know'..."

Thomas Davenport, 2009